Have you ever wanted to write about something but felt that something was holding you back? Have you ever been stuck? That is what happened to me with this article, in which I want to give my point of view on triage and forensic imaging. I have been slower than usual. I knew what I wanted to say, but I did not quite know how to approach it.

Some time ago I read a fantastic article by Brett Shavers (I strongly recommend you read it), titled "Forensic imaging raises its head", which he published following a ForensicFocus tweet. In that article, Brett sets out his opinion on the subject. Triage or imaging?

I fully share Brett's view.

Personally, if I have the time, the resources, the authority and the qualifications, I will make a forensic image. As always, it all depends on the case (if you can even know what you are going to find before starting a forensic investigation).

Before we continue, allow me to make an observation...

Acquiring a memory dump is a priority task whenever you have to intervene in a system, as I explained in a previous article, because the data it contains is both very important and very volatile. It must therefore be kept separate from other collection processes (such as triage), since those processes alter the data held in memory. In other words, the memory dump should not be taken as part of a triage process.

Much has been written, in many places, about triage. It is not a field that I have delved into. It's not something I'm going to delve into in this article.

When a triage is carried out, only 'important' information is collected (in quotation marks because, what counts as important information?), which reduces the time needed for acquisition and subsequent analysis. Specific, necessary elements are sought in order to start a later, deeper and more exhaustive investigation. After all, when an incident is declared, or when you have to intervene in a system to carry out a preliminary examination of its activity, you need data quickly.

If triage is successful, investigators will be able to extract relevant and necessary information for further processing.

Triage is undoubtedly a practical solution for time-critical investigations, because it reduces the volume of data. It's all about having an 'efficient' workflow. But what you believe to be efficient is sometimes not the best option.

For example, you might have to intervene in a system that is apparently involved in a misdemeanor (such as an intrusion into another small business system), so you choose to perform a triage. But when you start with the analysis you might see that this is a felony, (such as a terrorism or child pornography crime). By this I mean that you don't really know the scope of an investigation until you start it.

You have to find a balance.

The price to pay for rapid data extraction is data loss.

At this point I am obliged to mention our friend Locard, because I have not seen him mentioned when talking about triage. I haven't seen anyone talking about something that, personally, I think is important. The noise.

There are plenty of tools for triage. Tools that make noise. Noise that can do a lot of damage to the investigator's eyes when he has to analyze a forensic image.

Noise is the massive, unnecessary alteration of data on a system, which can in turn cause the loss of other data of interest. I like to call that loss 'pollution'.

You won't see that noise when you analyze the data extracted by a triage. But you will see it if a forensic image is created afterwards.

As I said before, there are many tools to perform a triage. You have small utilities at your disposal, such as NirSoft's (LastActivityView or USBDeview, for example). You also have the Sysinternals utilities (such as Autoruns or TCPView). You have Linux distributions focused on digital forensic analysis that ship their own sets of small utilities, such as Tsurugi Linux (with Bento) or Caine (with WinUFO in previous versions). And you have plenty of tools that simply run a set of small third-party utilities.

You also have to keep in mind that some of the tools that run third-party utilities can be detected as malicious and can trigger alarms.

And, in this situation, what do you do? Do you disable the antivirus? Be that as it may, that simple act translates into an alteration, perhaps an unnecessary one, of the system.

It all depends on the case, because you must have a plan.

You must choose the right procedure and tool for each case.

And this is where I wanted to get to. This is where I am going to present my opinion, with objective data. To do so (and after some headaches and other issues), I have chosen to perform a series of triage tests on a Windows 10 system, version 18343.1, virtualized under VirtualBox, with the following tools (all of them free): AChoir, Live Response Collection BambiRaptor, CyLR, FastIR and KAPE.

After installing the virtualized system, I plugged a USB device into it and performed a single action. After that action, I saved the state of the virtual machine and created a clone for each of the above tools. I also created a clone on which to perform a normal system shutdown. Once the clones were created, I advanced the system date on each one and ran the corresponding triage tool, disconnecting the virtual machine at the moment the tool finished. When I was done, I disconnected the original machine, as if I had cut off its power supply.

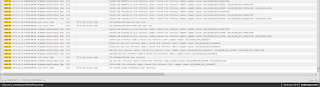

The acquisition process of each of these tools was monitored with Process Monitor v3.50, excluding the paths the tools were run from and wrote their output to, and including only the activity of the processes involved in the operation.
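As a rough illustration of how counts like the ones below can be obtained, here is a minimal sketch that tallies events per operation from a Process Monitor capture saved as CSV. It assumes the standard export columns (`Process Name`, `Operation`, `Path`, and so on); the process name `triage_tool.exe` and the sample rows are made up, and mapping "write events" to `WriteFile` and "file creation events" to `CreateFile` is my assumption, not necessarily the exact filter used in these tests.

```python
import csv
import io
from collections import Counter


def count_operations(lines, process_names):
    """Count events per Operation for the given process names.

    `lines` is any iterable of CSV lines, for example an open file.
    A real export may start with a BOM, so open it with encoding="utf-8-sig".
    """
    wanted = {name.lower() for name in process_names}
    counts = Counter()
    for row in csv.DictReader(lines):
        if row["Process Name"].lower() in wanted:
            counts[row["Operation"]] += 1
    return counts


# Made-up rows in the column layout of a Process Monitor CSV export.
SAMPLE = r'''"Time of Day","Process Name","PID","Operation","Path","Result","Detail"
"10:00:00.0000001","triage_tool.exe","100","CreateFile","C:\Windows\Temp\a.tmp","SUCCESS",""
"10:00:00.0000002","triage_tool.exe","100","WriteFile","C:\Windows\Temp\a.tmp","SUCCESS",""
"10:00:00.0000003","triage_tool.exe","100","WriteFile","C:\Windows\Temp\a.tmp","SUCCESS",""
"10:00:00.0000004","explorer.exe","200","WriteFile","C:\Users\u\b.dat","SUCCESS",""
"10:00:00.0000005","triage_tool.exe","100","ReadFile","C:\Windows\System32\config\SYSTEM","SUCCESS",""
'''

counts = count_operations(io.StringIO(SAMPLE), ["triage_tool.exe"])
print("total:", sum(counts.values()))
print("writes:", counts["WriteFile"])
print("creates:", counts["CreateFile"])
```

Bear in mind that, as far as I know, Process Monitor also reports the opening of existing files as `CreateFile`, so a count based on the operation name alone may overstate how many files were actually created.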

In addition to this monitoring, I also built a super timeline with Plaso, which I then viewed with Timeline Explorer.

Below are my results, without assessing what information each tool extracts.
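The "lines between tool execution and completion" reported for each tool come from restricting the timeline to a time window. A minimal sketch of that idea follows, assuming the timeline has been exported to CSV with an ISO 8601 timestamp column named `datetime`; the column names, timestamps and messages in the sample are hypothetical.

```python
import csv
import io
from datetime import datetime


def rows_in_window(lines, start, end, column="datetime"):
    """Return the timeline rows whose timestamp falls within [start, end]."""
    selected = []
    for row in csv.DictReader(lines):
        try:
            stamp = datetime.fromisoformat(row[column])
        except ValueError:
            continue  # skip rows without a parseable timestamp
        if start <= stamp <= end:
            selected.append(row)
    return selected


# Made-up timeline rows; all timestamps carry an explicit UTC offset.
TIMELINE = """datetime,timestamp_desc,source,message
2019-05-20T09:59:59+00:00,Creation Time,FILE,before the tool started
2019-05-20T10:00:05+00:00,Content Modification Time,FILE,written during the run
2019-05-20T10:03:10+00:00,Creation Time,FILE,created during the run
2019-05-20T10:10:00+00:00,Last Access Time,FILE,after the tool finished
"""

start = datetime.fromisoformat("2019-05-20T10:00:00+00:00")
end = datetime.fromisoformat("2019-05-20T10:05:00+00:00")
window = rows_in_window(io.StringIO(TIMELINE), start, end)
print(len(window), "lines of activity in the window")
```

The window limits must use the same time zone convention as the export; mixing timestamps with and without an offset would make the comparison fail.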

AChoir

With the use of AChoir there have been a total of 235,904 events.

I apply two more filters to see how the execution affects the system.

This tool has caused a total of 3,176 write events in files.

And a total of 2,936 file creation events.

After performing the timeline and showing only the lines between tool execution and completion, a total of 12,724 lines of activity are shown.

Live Response Collection BambiRaptor

With the use of BambiRaptor a total of 7,482,923 events have occurred.

I apply two more filters to see how the execution affects the system.

This tool has caused a total of 15,237 write events in files.

And a total of 268,187 file creation events.

After performing the timeline and showing only the lines between tool execution and completion, a total of 46,384 activity lines are shown.

CyLR

With the use of CyLR there have been a total of 16,601 events.

I apply two more filters to see how the execution affects the system.

This tool has caused a total of 958 write events in files.

And a total of 113 file creation events.

After performing the timeline and showing only the lines between tool execution and completion, a total of 507 activity lines are shown.

FastIR

With the use of FastIR there have been a total of 26,386 events.

I apply two more filters to see how the execution affects the system.

This tool has caused a total of 1,712 write events in files.

And a total of 5,947 file creation events.

After performing the timeline and showing only the lines between tool execution and completion, a total of 6,420 lines of activity are shown.

KAPE

A total of 177,507 events have occurred with the use of KAPE.

I apply two more filters to see how the execution affects the system.

This tool has caused a total of 1,840 write events in files.

And a total of 6,455 file creation events.

After performing the timeline and showing only the lines between tool execution and completion, a total of 6,918 lines of activity are shown.

Conclusions

These triage tests were run right after a single, known action had been performed. Imagine for a moment what you can find (or what you won't find) in a system where the time between an action and the intervention is hours, days, or even weeks.

Given the 'noise' that some tools are capable of generating, I am in favour of, whenever possible, acquiring a forensic image. There will be time to extract the artifacts that are needed.
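To see the figures reported above side by side, the following sketch simply tabulates them. The numbers are the ones given in each tool's section (AChoir's write count is taken as 3,176); the last column, the share of write and creation events over the total, is a derived figure added here for illustration only, and nothing in the table is meant as a ranking of the tools.

```python
# Figures as reported in the sections above, one entry per tool.
RESULTS = {
    "AChoir": {"events": 235_904, "writes": 3_176, "creates": 2_936, "timeline": 12_724},
    "BambiRaptor": {"events": 7_482_923, "writes": 15_237, "creates": 268_187, "timeline": 46_384},
    "CyLR": {"events": 16_601, "writes": 958, "creates": 113, "timeline": 507},
    "FastIR": {"events": 26_386, "writes": 1_712, "creates": 5_947, "timeline": 6_420},
    "KAPE": {"events": 177_507, "writes": 1_840, "creates": 6_455, "timeline": 6_918},
}


def modifying_share(row):
    """Fraction of all monitored events that are file writes or creations."""
    return (row["writes"] + row["creates"]) / row["events"]


# Order the tools by total number of monitored events, lowest first.
ordered = sorted(RESULTS.items(), key=lambda item: item[1]["events"])

print(f"{'tool':<12}{'events':>11}{'writes':>9}{'creates':>9}{'timeline':>10}{'w+c share':>11}")
for name, row in ordered:
    print(
        f"{name:<12}{row['events']:>11,}{row['writes']:>9,}"
        f"{row['creates']:>9,}{row['timeline']:>10,}{modifying_share(row):>11.1%}"
    )
```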

A triage can be effective in some cases if the right tool or procedure is used. Keep in mind that what seems an appropriate option may turn out to be an ineffective way of classifying artifacts and, therefore, of prioritizing the case at hand.

Being fast at data extraction does not guarantee that you will extract the data your investigation needs. Find a balance. Plan your steps well, because you only get one chance to triage (unless you want to generate more noise and lose more data).

The most efficient option is not usually the fastest one. When you intervene in a system, you don't really know what you will find in it.

If you use a tool, use the right one for each case. For example, the BambiRaptor Live Response Collection tool does not extract the Registry transaction logs, whereas CyLR and KAPE do. Likewise, CyLR does not generate reports alongside the extracted data, while KAPE does.

You should know that, just as there are situations in which the system cannot be turned off to make a forensic image (so you have to acquire it live), there are also situations in which you can disconnect the system before performing a triage (and so avoid unnecessary noise). Disconnect. Do not shut down. Because the difference between the two translates, once again, into a loss of information.

For example, in this test the system generated 178 lines once the shutdown process started, whereas no activity was generated when the system was disconnected.

Updated: 20 May 2019

The sole purpose of this article has been to show that the tools we run generate noise during execution. It is true that much of that noise consists of read-only events. But that doesn't stop them from being events. Even among the file write and file creation events, many are the same operations repeated over and over. But those events are there.

As far as file write and file creation events are concerned, my main concern is that when you write to a file, that file may grow, and when you create a file, an entry is allocated for it in the MFT. Suppose a certain type of content (call it 'X') has been deleted. A file that grows needs a new cluster, and that cluster could be one previously assigned to the deleted information. File creation also worries me because, when a file is deleted, its record in the MFT is marked as free, and that record can then be overwritten by a newly created file.
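The record-reuse concern can be pictured with a toy model. This is only a sketch, not how NTFS is implemented: it assumes a small table of record slots in which deleting a file frees its slot but leaves the old metadata behind, and creating a file claims the lowest free slot. The class and file names are invented for the example.

```python
class ToyRecordTable:
    """Toy stand-in for a file record table with a fixed number of slots."""

    def __init__(self, size):
        self.slots = [None] * size      # metadata last written to each slot
        self.in_use = [False] * size    # whether the slot belongs to a live file

    def create(self, name):
        """Claim the lowest free slot, overwriting whatever it still held."""
        for index, used in enumerate(self.in_use):
            if not used:
                self.slots[index] = name
                self.in_use[index] = True
                return index
        raise RuntimeError("record table is full")

    def delete(self, name):
        """Free the slot of a live file but leave its metadata in place."""
        for index, stored in enumerate(self.slots):
            if stored == name and self.in_use[index]:
                self.in_use[index] = False
                return index
        raise KeyError(name)

    def recoverable(self):
        """Names still readable from slots that no longer belong to a live file."""
        return [n for n, used in zip(self.slots, self.in_use) if n is not None and not used]


table = ToyRecordTable(size=4)
for name in ("report.docx", "X.jpg", "notes.txt"):
    table.create(name)

freed = table.delete("X.jpg")
before = table.recoverable()                  # the deleted entry is still visible

reused = table.create("triage_output.csv")    # a tool writes one new file
after = table.recoverable()                   # the deleted entry has been overwritten

print(before, after, freed == reused)
```

In this model, a single file created by a triage tool is enough to erase the trace of the deleted file. How often that happens on a real volume depends on the file system's allocation behaviour, which this sketch does not attempt to reproduce.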

I am also concerned about these events in relation to the time that has elapsed since the incident. It is not uncommon to find incidents that have been going on for days or even weeks, and the events a tool generates can have repercussions on certain system logs. For example, '.evtx' log files have, by default, a maximum size of 20480 KB.

Have I run tests to see what information may be lost during the execution of a triage tool? No. I haven't done those tests, although I may do so in the future. The purpose of this article is to show that the tools we run generate noise. Nothing more.
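A back-of-the-envelope calculation shows why that size limit matters. The sketch below assumes the log overwrites its oldest events once full, ignores file header and chunk overhead, and uses an average event size and a daily event rate that are purely illustrative, not measured values.

```python
MAX_LOG_KB = 20_480  # default maximum log size mentioned above


def estimated_retention_days(events_per_day, avg_event_bytes, max_log_kb=MAX_LOG_KB):
    """Rough number of days of events a log of the given size can hold."""
    capacity_events = (max_log_kb * 1024) // avg_event_bytes
    return capacity_events / events_per_day


# Illustrative assumptions: 1 KiB per event, 5,000 events per day.
days = estimated_retention_days(events_per_day=5_000, avg_event_bytes=1_024)
print(f"about {days:.1f} days of history")

# The same log under ten times the event rate holds a tenth of the history.
busy_days = estimated_retention_days(events_per_day=50_000, avg_event_bytes=1_024)
print(f"about {busy_days:.2f} days of history on a busier system")
```

Under those assumptions, any extra events written to a log while a tool runs push the oldest entries out sooner, which is exactly the kind of loss that matters when the incident started weeks earlier.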

It is also true that, within all of these events, there will be events that may not directly influence the artifacts that we may find in a system. But that is something that will not be known until it is analyzed.

At no time do I intend to judge whether one tool is better than another. There are dozens of tools for triage. I haven't compared all of them. I've only run a few tests (objective ones, I think) on some of the tools I use the most.

Some tools let you choose whether to collect artifacts or just reports, some let you choose which artifacts to collect, and some offer no choice beyond running the tool itself. Therefore, during these tests, I chose to run each tool with its maximum options, because it would not be fair to compare a tool run with minimal options against a tool that offers no options at all.

I don't like to recommend tools, because each tool has its own place, depending on the case. I don't like giving opinions on tools. I only like to talk about things that I have seen with my own eyes (with my own tests). What is presented here are only my tests.

It is the end users who must know and assess which tools are at their disposal, choose the right one for each case, and understand how it works and the risks involved in using it, or in not using it. In the end, everything depends on many factors, but it is the end user who has the last word.

And all this is just my own take. The musings of someone merely curious about the subject.

That's all.

Marcos
